Agentic Disillusionment Is Real: The Full-Stack Observability Fix for 2026

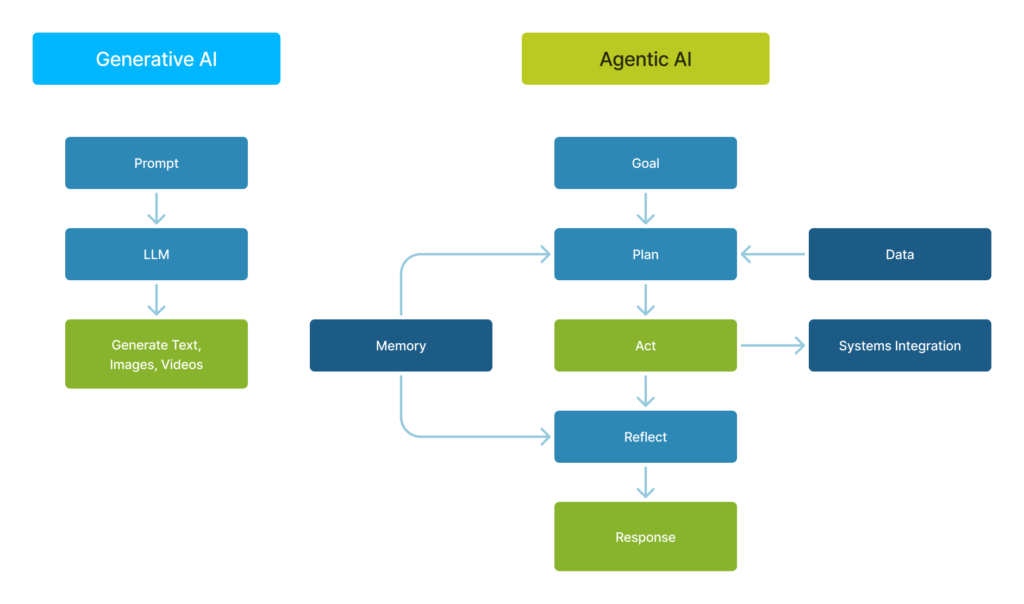

The year 2025 was a turning point for agentic AI in the enterprise. Organizations invested in autonomous agents with the expectation that these systems would operate across complex workflows with less human intervention. Early pilots showed promise, but production environments encountered friction, and “agentic disillusionment” set in. The models themselves were rarely the cause: large language models largely performed as designed, and the failures occurred at the system level. Most enterprise technology stacks were never designed to support autonomous execution. Legacy request-response architectures, human-centric observability, and context-poor data layers proved incompatible with agentic workflows.

In 2026, AI agent infrastructure will be defined less by model intelligence and more by the systems that surround it. Enterprises moving beyond experimentation must treat observability, execution resilience, and decision auditability as first-class infrastructure concerns. The following sections outline a practical 2026 observability fix list and three foundational changes required to make autonomous agents traceable, debuggable, and auditable in production.

Technical Root of Agentic Disillusionment

Agentic failure stems primarily from a mismatch between the probabilistic nature of large language models (LLMs) and the deterministic demands of business workflows, which produces a “reality gap.” It is often accompanied by “agent washing,” where simple chatbots are rebranded as sophisticated agents. The underlying technical causes of agentic failure are as follows:

- Brittle Tool Integrations and API Failures: Agents struggle with poorly documented or fragile external system integrations. This situation arises when tool schemas conflict with the model’s prompts, resulting in failures like unhandled pagination issues or inefficient search outputs, which can lead to execution crashes.

- Lack of Observability and Traceability: The opaque nature of agentic systems complicates the debugging process, with failures often untraceable due to the absence of structured logs. This opacity results in reactive responses rather than proactive solutions when an agent malfunctions.

- Context Window and Memory Degradation: Agents may lose continuity in long conversations once the accumulated history exceeds their context window, causing them to forget earlier directives or preferences. This degradation can lead to significant drops in performance after just a few interactions.

- Flawed Task Decomposition and Planning: Agents often misinterpret tasks, which leads to looping, overspending, or constraint violations. Poor decomposition of complex goals into workable steps contributes to many pilot failures.

- Non-Determinism and “Silent” Model Drift: LLMs do not guarantee consistent outputs for identical inputs due to their stochastic nature. This issue becomes exacerbated with model updates or shifts in data patterns, often leading agents to fail in real-world applications despite prior success in testing.

- Security & Governance Vulnerabilities: Agents can be susceptible to malicious inputs that alter their operational instructions, increasing the risk of security breaches and unauthorized operations.

- Legacy System Incompatibility: The integration of advanced agents with outdated legacy systems, which do not support dynamic interactions, can hinder the agents’ operational effectiveness, causing delays or failures in task execution.
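Two of the failure modes listed above, unhandled pagination and unbounded looping, can be contained in the tool layer rather than left to the model. The following is a minimal sketch, not a reference implementation: the function `fetch_all_pages`, the `next_cursor` field, and the in-memory `fake_fetch` API are all hypothetical names chosen for illustration.

```python
from typing import Callable, List, Optional


class StepBudgetExceeded(RuntimeError):
    """Raised when a tool call would run past its page budget."""


def fetch_all_pages(
    fetch_page: Callable[[Optional[str]], dict],
    max_pages: int = 20,
) -> List[object]:
    """Follow a cursor until it is exhausted, but never beyond max_pages."""
    items: List[object] = []
    cursor: Optional[str] = None
    for _ in range(max_pages):
        page = fetch_page(cursor)
        items.extend(page.get("items", []))
        cursor = page.get("next_cursor")
        if cursor is None:
            return items
    # Fail loudly with context instead of looping or truncating silently.
    raise StepBudgetExceeded(f"stopped after {max_pages} pages; cursor={cursor!r}")


# Hypothetical two-page API used only to exercise the wrapper.
_PAGES = {
    None: {"items": [1, 2], "next_cursor": "a"},
    "a": {"items": [3], "next_cursor": None},
}


def fake_fetch(cursor: Optional[str]) -> dict:
    return _PAGES[cursor]


all_items = fetch_all_pages(fake_fetch)
```

The explicit budget turns a potential runaway loop into a named, catchable error that the agent or its supervisor can reason about.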

The 2026 Observability Fix for AI Agents

Enterprise systems were designed around deterministic execution: a request is issued, a response is returned, and the transaction ends. This model works for human-driven workflows, but it breaks down under agentic execution. Autonomous agents operate across time, tools, and uncertainty. A single task may span multiple systems, pause while waiting on external conditions, resume after validation, or partially complete before encountering constraints. In synchronous architectures, these interruptions cause context loss, silent failures, and retries that cannot be correlated back to intent.

An enterprise that wants to operate agents reliably in production must move away from blocking request-response patterns and adopt execution models that assume interruption, delay, and recovery as normal conditions. The following pillars outline the minimum requirements for production-grade AI agent infrastructure in 2026.

Fix 1: Semantic Telemetry

Traditional observability was built for human operators. Logs, metrics, and traces are optimized for dashboards, alerts, and post-incident analysis, not for autonomous systems that must reason about failures in real time.

Agentic systems require telemetry that can be consumed and interpreted by machines, not just visualized by humans. Flat log lines and numeric counters are insufficient when an agent needs to understand why an action failed and what corrective step is appropriate.

Semantic telemetry enriches signals with machine-readable context: intent, entity relationships, failure causes, and downstream impact. Instead of emitting a generic error code, systems expose structured explanations that an agent can parse, reason over, and act upon.

Example shift:

From:

Error 500: null pointer exception

To:

Vendor lookup failed because the “last_updated” field was null, preventing a valid match. Procurement workflow paused before vendor ID resolution.

Execution impact:

With semantic telemetry, agents can distinguish between transient failures, data-quality issues, and authorization constraints. This enables targeted retries, alternative execution paths, or escalation, without human intervention.
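One way to picture this is a structured failure event paired with a small routing function. The sketch below is illustrative only: the field names, the `FailureClass` categories, and the action strings are assumptions made for this example, not an established schema.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class FailureClass(Enum):
    TRANSIENT = "transient"
    DATA_QUALITY = "data_quality"
    AUTHORIZATION = "authorization"


@dataclass
class SemanticFailure:
    """A machine-readable failure signal (hypothetical schema)."""
    workflow: str
    step: str
    failure_class: FailureClass
    cause: str
    impact: str

    def to_json(self) -> str:
        record = asdict(self)
        record["failure_class"] = self.failure_class.value
        return json.dumps(record)


def next_action(event: SemanticFailure, attempt: int, max_retries: int = 3) -> str:
    """Choose a corrective step from the failure class, not from free text."""
    if event.failure_class is FailureClass.TRANSIENT and attempt < max_retries:
        return "retry"
    if event.failure_class is FailureClass.DATA_QUALITY:
        return "use_alternative_source"
    # Authorization problems and exhausted retries go to a human or supervisor.
    return "escalate"


event = SemanticFailure(
    workflow="procurement",
    step="vendor_lookup",
    failure_class=FailureClass.DATA_QUALITY,
    cause="last_updated field was null, preventing a valid match",
    impact="workflow paused before vendor ID resolution",
)
action = next_action(event, attempt=0)
payload = event.to_json()
```

Here `action` is `"use_alternative_source"`: because the event is classified as a data-quality problem, the agent does not waste retries on a call that would fail the same way again.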

Operational outcome:

Mean time to recovery is reduced not because incidents disappear, but because resolution shifts from human interpretation to automated correction.

Fix 2: Workflow-Level Correlation

Most enterprise APIs are designed for short-lived, synchronous interactions. State exists only for the duration of the request, and failures terminate execution. This model assumes linear workflows and reliable connectivity, assumptions that do not hold for autonomous agents.

Agentic workflows are inherently non-linear. An agent may initiate an action, encounter a permission constraint, wait for an external approval, retrieve alternative data, and then resume execution minutes or hours later. When state is lost between these steps, agents restart blindly or fail silently. To support this execution model, agentic systems must be built on an event-driven architecture rather than on blocking calls.

The shift:

Replace tightly coupled API calls with asynchronous events routed through a message bus (for example, Apache Kafka or Amazon EventBridge). Each step emits an event that records intent, state, and outcome.

Execution impact:

Agents can initiate long-running tasks, persist execution state, and resume precisely where they left off when downstream conditions are satisfied. Failures become observable checkpoints rather than terminal endpoints.
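The resume behavior described here can be sketched with a correlation ID and an append-only event log. This is a simplified illustration under stated assumptions: `EventLog` is an in-memory stand-in for a durable bus or event store, and the step names and the `approvals` set are hypothetical.

```python
import uuid
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Event:
    correlation_id: str  # ties every step and retry back to one workflow run
    step: str
    status: str          # "completed" or "waiting"
    detail: str = ""


class EventLog:
    """In-memory stand-in for a durable message bus or event store."""

    def __init__(self) -> None:
        self._events: List[Event] = []

    def emit(self, event: Event) -> None:
        self._events.append(event)

    def history(self, correlation_id: str) -> List[Event]:
        return [e for e in self._events if e.correlation_id == correlation_id]


STEPS = ["resolve_vendor", "request_approval", "create_purchase_order"]


def run_workflow(log: EventLog, correlation_id: str, approvals: Set[str]) -> str:
    """Run the remaining steps, skipping any already recorded as completed."""
    done = {e.step for e in log.history(correlation_id) if e.status == "completed"}
    for step in STEPS:
        if step in done:
            continue
        if step == "request_approval" and correlation_id not in approvals:
            # Record a checkpoint and stop; nothing is lost while waiting.
            log.emit(Event(correlation_id, step, "waiting", "approval pending"))
            return "paused"
        log.emit(Event(correlation_id, step, "completed"))
    return "finished"


log = EventLog()
cid = str(uuid.uuid4())
approvals: Set[str] = set()
first = run_workflow(log, cid, approvals)   # pauses at the approval step
approvals.add(cid)                          # approval arrives later
second = run_workflow(log, cid, approvals)  # resumes without redoing step 1
```

Because completed steps are read back from the log, the second call does not repeat `resolve_vendor`, and the full history for `cid` shows the pause and the resumption as correlated events.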

Operational outcome:

Workflows become recoverable by design. Retries are correlated, partial execution is visible, and agents no longer need to replan entire tasks due to transient interruptions.

Fix 3: Decision Auditability via Context-Rich Metadata

Enterprise data strategies have historically focused on cleanliness and completeness. While necessary, clean data alone is insufficient for autonomous decision-making. Agents require context, not just values. A numeric field or record snapshot does not convey priority, risk, intent, or constraints unless those relationships are explicitly modelled. For example, retrieving a customer balance of $5,000 is meaningless without understanding whether the account is high priority, under dispute, flagged for delinquency, or associated with open support cases. Without this context, agents act on isolated facts rather than business reality.

The shift:

Move beyond flat relational schemas toward context-rich metadata layers built on knowledge graphs and augmented vector metadata. These layers encode business rules, entity relationships, and operational constraints alongside raw data.

Execution impact:

Agents navigate deterministic relationships rather than inferring meaning probabilistically. Decisions are grounded in explicit institutional knowledge rather than pattern matching alone.
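As a rough illustration, the customer-balance example can be expressed as a record that carries its context, plus explicit rules the agent must consult before acting. The sketch uses a plain dictionary of rules as a stand-in for a knowledge graph; the flag names, action names, and the `check_action` function are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class AccountContext:
    """A balance plus the business context needed to act on it."""
    balance: float
    tier: str = "standard"
    flags: Set[str] = field(default_factory=set)
    open_cases: int = 0


# Explicit, inspectable rules: which flags block which actions (assumed values).
BLOCKED_BY_FLAG: Dict[str, Set[str]] = {
    "under_dispute": {"send_collection_notice", "charge_late_fee"},
    "delinquent": {"extend_credit"},
}


def check_action(action: str, ctx: AccountContext) -> Tuple[bool, str]:
    """Return (allowed, reason) so every decision leaves an audit trail."""
    for flag in sorted(ctx.flags):
        if action in BLOCKED_BY_FLAG.get(flag, set()):
            return False, f"{action} blocked: account is {flag}"
    return True, "no constraint applies"


ctx = AccountContext(
    balance=5000.0,
    tier="high_priority",
    flags={"under_dispute"},
    open_cases=1,
)
blocked = check_action("send_collection_notice", ctx)
permitted = check_action("send_statement", ctx)
```

The same $5,000 balance yields different permitted actions depending on the attached flags, and the returned reason string can be logged alongside the decision for later audit.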

Operational outcome:

Hallucination risk is significantly reduced, not by constraining the model, but by constraining the environment in which it operates.

What AI Agent Infrastructure Demands in 2026

Success in AI agent infrastructure in 2026 will be determined less by model sophistication and more by system discipline. As autonomy scales and human oversight diminishes, infrastructure must provide clear observability, workflow-level correlation, and decision auditability. These capabilities form the backbone of reliable autonomous execution.

Closing these observability gaps requires integrated expertise across telemetry, event-driven pipelines, and enterprise data systems, an approach partners such as OnStak apply when building agent-ready infrastructure designed for scale, not isolated AI experiments.

Conclusion

By embedding business context directly into the data layer, organizations reduce the degrees of freedom available to autonomous agents. Decisions are no longer made in isolation or inferred from incomplete signals; they are executed within explicitly defined relationships, priorities, and constraints. This does not eliminate model uncertainty, but it materially limits where uncertainty can manifest, shifting agent behavior from probabilistic interpretation toward bounded, auditable execution.